If you’re still on the fence about whether mobile VR can meet the standards of high-end tethered HMDs, just stop it. It’s time to come down.

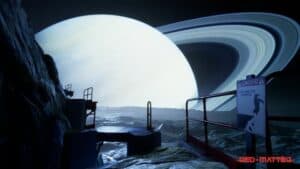

Vertical Robot’s port of Red Matter to Oculus Quest is a stunning achievement. Whatever you think of the actual gameplay (we’re not fans of puzzles, but we loved it), the visuals are breathtaking. We find ourselves going back to the game again and again just to observe the shadows and reflections.

Here’s a quick view of the amazing visual quality.

This popular retro Cold War puzzle game has a lot going for it. Add in the stunning visuals and you have to remind yourself that a smartphone processor is powering the entire experience. At times, you feel like an Nvidia GTX 1080 is behind the graphics; it’s that good. But in truth, it’s just the beginning of where virtual reality is headed.

Red Matter on Oculus Quest

For details on how the port to Oculus Quest was pulled off, see UploadVR’s interview with Norman Schaar, Vertical Robot’s co-founder and technical artist. We’ve reproduced a portion here, but it’s worth reading in full.

UploadVR: Approximately how long has the Quest port taken?

Norman Schaar: We started working on the port right around the time we shipped Red Matter for PSVR back in December. So it must have been around 8 months for a 2-person team. It’s a bit hard to tell, as we have other company-related matters to attend to, which have certainly robbed us of some time. That being said, quite a fair bit of overtime also went into the port so we could ensure Red Matter would release on Quest sooner rather than later.

UploadVR: Why was it important for you to set this standard for Red Matter’s visuals on Quest?

NS: We found ourselves in a very similar situation 2 years ago when we started the development of Daedalus for GearVR. We were looking at the landscape of GearVR games and realized that most games at that time were extremely simple flat-shaded games. We can only speculate as to why that was, but it certainly isn’t a hardware limitation for the most part, although thermal limits on GearVR were something to keep in mind.

Having had experience with both AAA console games and mobile games, we simply knew we could do better. There are certain effects that we pulled off years ago on an iPad 2, so it had to be possible on GearVR, and on Quest for that matter. Better graphics on mobile VR are absolutely possible with the proper time and know-how. A lot of old tricks can be used, but we also developed new ones along the way.

Visuals are definitely one of our strong suits. It’s one of the aspects we have a lot of experience in, so it made a lot of sense to make use of that skill set here, especially in mobile VR, where great graphics seem somewhat lacking.

We also like to argue that better graphics enhance immersion. A lot of our improvements focus on the notion of providing additional depth cues to the player. Parallax-corrected reflections, the raytraced laser reflection, multiple point-light reflections: all these add layers of depth to the image and help you better understand the depth in the world. This is especially true nowadays, when we don’t have varifocal displays with proper image defocusing. Any depth cue that you can add will help, even if the player only registers it on a subconscious level.

The team put aside the easy solution of running automated optimization algorithms and instead manually reduced the polycount of each mesh. That, combined with the instability of earlier versions of the Quest firmware, made for an extremely time-consuming development process. As if that weren’t enough, the team wrote custom shaders instead of using the ones that come with Unreal Engine.

But you see the payoff as soon as you step into the game. And the market bears it out: by the third week of August, sales of Red Matter on the Quest had surpassed its sales on the Rift.

Visual Compromises, of Course

Don’t get us wrong, not everything is perfect in Red Matter. The graphics drain the battery far more than most other experiences on the Quest. And it uses fixed foveated rendering, which lowers the visual quality toward the edges of the display. In itself, that’s fine, and foveated rendering will likely become a technique all HMDs use once we have inexpensive eye tracking. But because the Quest can’t track where you’re looking, the sharp region doesn’t follow your eyes: shift your gaze toward the edges of the view in Red Matter and you’ll see visual artifacts.

Yes, it’s better on a tethered HMD, but it’s still astonishing for a mobile headset. And the graphics will only get better in the future.

Movement and Controls

Teleporting through the game follows a fairly standard arc-and-thumbstick approach. But you move slowly (simulating the experience of a low-gravity planet), so there’s less chance of motion sickness. Besides, you’re almost always in a series of smaller rooms that make for stable reference points.

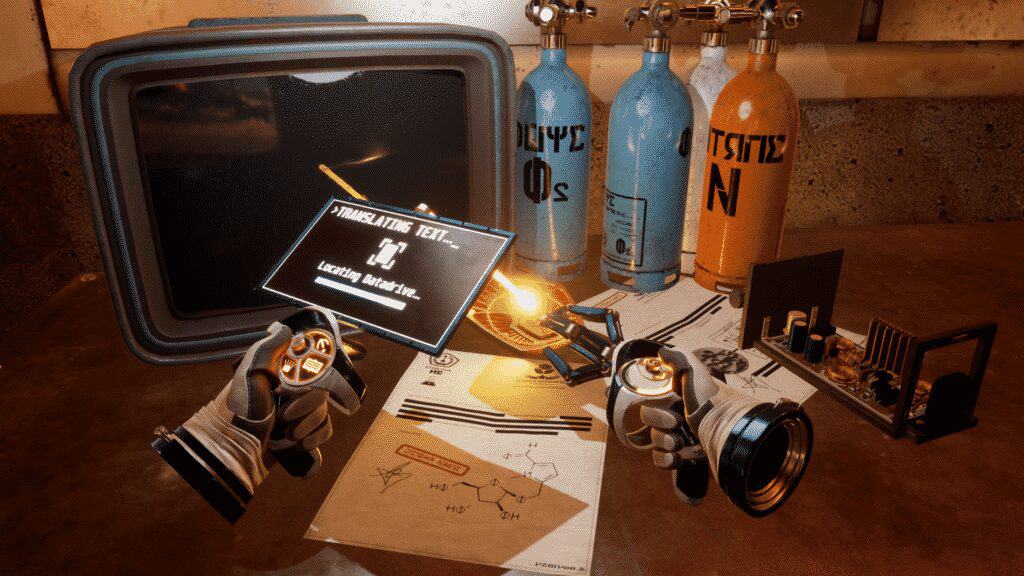

Everything is done with the Oculus Quest’s new Touch controllers. Your robotic left hand can morph into several tools, including a decoder/translator that helps you understand clues in the game. Some of the clues are essential for solving the puzzles; others just add to the realism of a now-abandoned space station. Sometimes the most effective details don’t advance the narrative; they just build the context.

The Quest port of Red Matter takes full advantage of the freedom of an untethered HMD. You can move around freely, as far as your Guardian boundary will allow.

Oculus, are you listening? It may be time to offer the option of larger spaces.

What’s Coming Next

When the Quest was announced last year, there were initial concerns about its visual quality. We were only just getting free (well, almost free) of the screen-door effect. Red Matter makes it clear that the Quest can hold its own with any headset.

Over the past year, Facebook’s new headset has rejuvenated virtual reality and set it on its way to the future. When you experience Red Matter on Oculus Quest, you’re catching a glimpse of a world to come, a world where reflections and shadows undermine our standard conceptions of what is real and what is only virtual.

And with the Oculus Connect conference only a few weeks off, there’s much more to come.

Emory Craig is a writer, speaker, and consultant specializing in virtual reality (VR) and generative AI. With a rich background in art, new media, and higher education, he is a sought-after speaker at international conferences. Emory shares unique insights on innovation and collaborates with universities, nonprofits, businesses, and international organizations to develop transformative initiatives in XR, GenAI, and digital ethics. Passionate about harnessing the potential of cutting-edge technologies, he explores the ethical ramifications of blending the real with the virtual, sparking meaningful conversations about the future of human experience in an increasingly interconnected world.