OpenAI announced the release of GPT-4, its new state-of-the-art multimodal AI that will disrupt multiple areas of society. It’s a significant advance and reveals how rapidly AI is developing. As we’ve said before, this is only the beginning of the AI revolution – despite its six-decade-long history. OpenAI claims the new version will be more accurate, creative, and collaborative. And, of course, it will up the stakes for the ethical challenges we face in integrating AI into how we learn, work, and interact with each other.

In short, our world is about to change – profoundly.

A recording of today's (March 14, 2023) Developer Livestream is still available. In it, Greg Brockman, President and Co-Founder of OpenAI, showcases GPT-4 and some of its capabilities and limitations.

GPT-4 Details

As the latest large language model (LLM) from OpenAI, GPT-4 (Generative Pre-Trained Transformer 4) inches tantalizingly close to human-like performance across multiple benchmarks. OpenAI describes GPT-4 as follows:

We’ve created GPT-4, the latest milestone in OpenAI’s effort in scaling up deep learning. GPT-4 is a large multimodal model (accepting image and text inputs, emitting text outputs) that, while less capable than humans in many real-world scenarios, exhibits human-level performance on various professional and academic benchmarks. For example, it passes a simulated bar exam with a score around the top 10% of test takers; in contrast, GPT-3.5’s score was around the bottom 10%. We’ve spent 6 months iteratively aligning GPT-4 using lessons from our adversarial testing program as well as ChatGPT, resulting in our best-ever results (though far from perfect) on factuality, steerability, and refusing to go outside of guardrails.

Let’s run through some of the advances:

- Multimodality: Besides taking text input (or prompts), GPT-4 can accept images as input, although it still produces only text output.

- Higher capacity: GPT-4 can process and retain more information within a single conversation, which enables it to provide more accurate answers and perform more complex tasks.

- Faster response times: Previous versions of GPT could be slow, as you may have experienced when trying to use ChatGPT.

- Improved human-like responses: GPT-4 can generate more human-like responses, providing a more seamless user experience that will revolutionize the use of chatbots.

- Human-like performance: While previous versions of GPT could pass some academic exams, GPT-4 scores in the top percentiles on many standardized tests.

The new model can handle more than 25,000 words of text, a huge leap forward from the 500-word limit of ChatGPT (though people have discovered workarounds). The expanded text capacity will facilitate longer conversations and allow users to analyze much longer documents.

The Impact on Education and Other Areas Will Be Dramatic

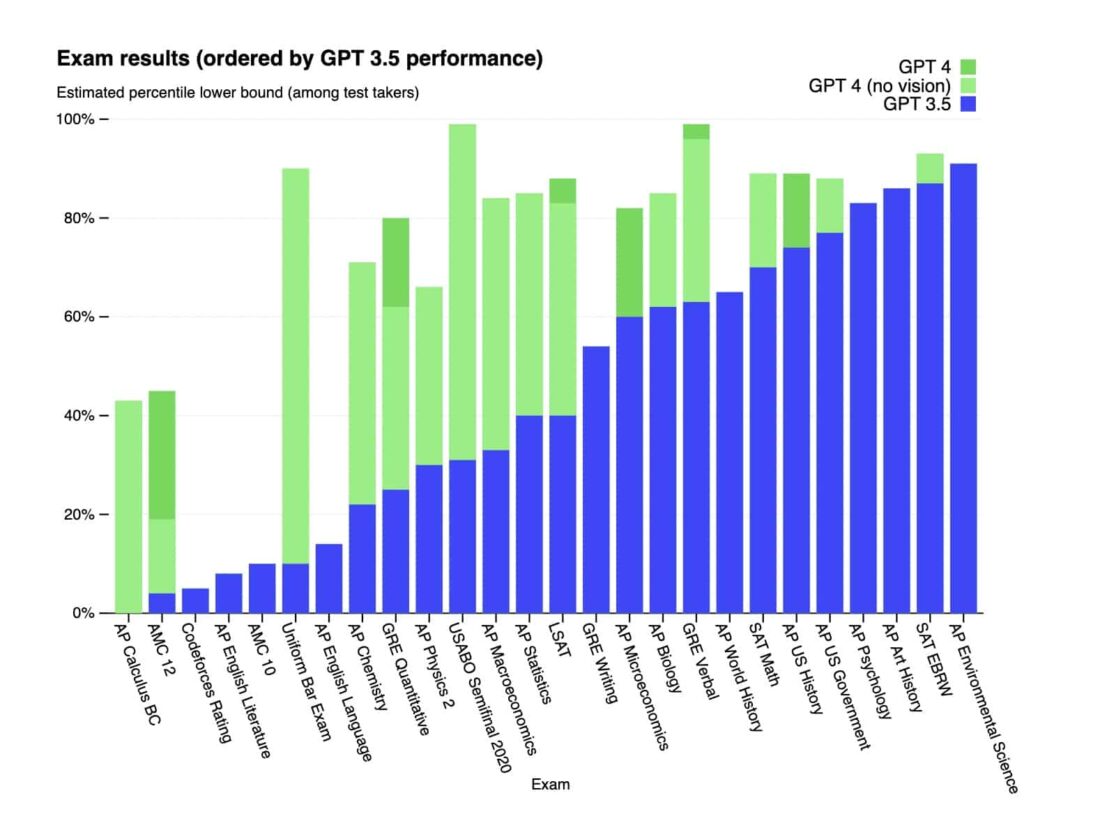

We’ll need to experiment with GPT-4 to understand its full impact, but OpenAI has already revealed its test-taking ability. For example, ChatGPT was able to pass the Uniform Bar Exam but scored in the bottom 10%. GPT-4 wiped out that dismal result, posting a score in the top 10% of test-takers. It also scored in the 88th percentile or above on the LSAT, SAT Math, and SAT Evidence-Based Reading & Writing exams.

OpenAI’s chart shows how GPT-4 handles standardized tests compared to GPT-3.5.

Those results will be a watershed moment for schools, universities, and other learning organizations that rely on standardized testing. Where those tests continue to be used, test-takers will undoubtedly have to leave their smartphones outside the testing facility.

But the impact will go far beyond standardized testing. GPT-4 will be significantly better than ChatGPT at writing college-level essays and other academic work.

Limitations and Safety Challenges of GPT-4

Even though GPT-4 is a significant advance over previous versions, it still has limitations that parallel those we encountered in the earlier models. While it makes fewer mistakes, it still “hallucinates” facts (as OpenAI likes to phrase it), producing cogent responses that have no basis in reality. Although OpenAI reports that GPT-4 scores 40% higher than GPT-3.5 on its internal factuality evaluations, the company cautions that care is needed, especially in high-stakes contexts.

GPT-4 also raises significant safety challenges, which OpenAI has tried to mitigate before releasing the new version. The specific risks explored in OpenAI’s GPT-4 system card include:

- Hallucinations

- Harmful content

- Harms of representation, allocation, and quality of service

- Disinformation and influence operations

- The proliferation of conventional and unconventional weapons

- Privacy

- Cybersecurity

- Potential for risky emergent behaviors

- Economic impacts

- Acceleration

- Overreliance

One problematic area where the company has held back is GPT-4’s new image capability. OpenAI has limited the release of the image feature because of concerns about abuse, so the version available now accepts only text input. Currently, GPT-4’s image input is being tested with a single partner, Be My Eyes, a smartphone app for blind and low-vision users that uses it to recognize and describe scenes.

The stakes will be higher now that the public is gaining access. While some mitigation efforts depend entirely on OpenAI’s own work (harmful content, privacy), other areas are not entirely under its control (economic impacts, overreliance). We’ll be following these closely over the coming months.

Getting Access

You’ll get immediate access to GPT-4 if you subscribe to ChatGPT Plus, OpenAI’s $20-a-month premium subscription. You can also join a waitlist, which will eventually give you access, though you’ll have to describe some of your proposed use cases for the new multimodal model. The waitlist grants API access only, not access to the finished ChatGPT product. The easiest route to free access is through Microsoft’s Bing, following Microsoft’s surprise announcement that Bing Chat has been running on GPT-4 all along.

The AI revolution is now in full swing, and we will gain access to incredible opportunities and face profound challenges in every area of human life and society.

Welcome to the future.

Emory Craig is a writer, speaker, and consultant specializing in virtual reality (VR) and generative AI. With a rich background in art, new media, and higher education, he is a sought-after speaker at international conferences. Emory shares unique insights on innovation and collaborates with universities, nonprofits, businesses, and international organizations to develop transformative initiatives in XR, GenAI, and digital ethics. Passionate about harnessing the potential of cutting-edge technologies, he explores the ethical ramifications of blending the real with the virtual, sparking meaningful conversations about the future of human experience in an increasingly interconnected world.