A brain-computer interface for AR? It could happen sooner than you think. The Verge’s Casey Newton got hold of leaked audio from internal Facebook meetings where the question came up. In one section, Mark Zuckerberg talked about the possibilities of brain-computer interfaces (BCI) for augmented reality devices.

Without a touchscreen or keyboard, input remains a fundamental challenge for AR glasses. Tapping the side of an eyeglass frame or using voice commands (especially in crowded environments) won’t make your smart glasses seem very smart. If you could simply think a command and have it execute, you would solve a host of problems.

And create some fascinating new ones.

The question then becomes: do you want a tech company – Facebook or another one – inside your head? I dread the idea of even trying to read the terms of service for this – not that anyone ever does.

But our collective refusal to read the TOS will only make our decisions that much more interesting.

Two Approaches to BCI

There are two approaches to brain-computer interfaces: invasive and noninvasive. The latter is easier to implement and will generate less backlash. In a way, we’re already taking steps toward it with the data that Alexa and other devices gather from our lives. Honestly, the public might embrace it simply because we would get something in return for the loss of privacy: command and control of our AR (and possibly VR) devices.

Whether we go the invasive route depends on a host of factors, including public acceptance. Step outside the diehard tech community and it’s a difficult bridge to cross. For some, it may be like nuclear weapons, a place where we draw the line. On the other hand, we could see it like nuclear power, where many conclude that the benefits outweigh the risks.

Similar technology, just different questions. Do we want to heat our homes? Or blow up the world?

All we know is that our current debates over privacy will look like child’s play compared to the issues we will face in the not-so-distant future.

Neuralink’s Invasive Solution

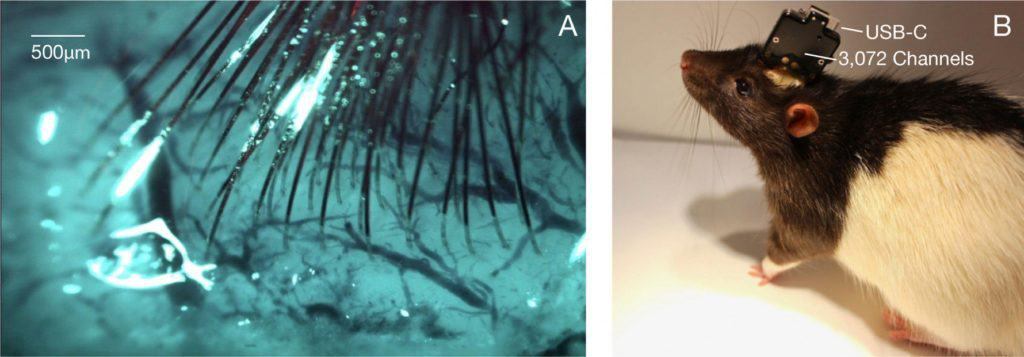

Brain-computer interfaces for AR came up in the meeting through a question about developments at Neuralink, the company founded by Elon Musk and others in 2016. Neuralink is small, minuscule by Facebook standards, with only 90 employees. But it has drawn high-profile neuroscientists and claims to have a BCI solution (extremely thin threads implanted in the brain) that will be ready for human experiments in 2020.

Of course, Elon Musk is notorious for making grandiose promises. But never underestimate the man. The major challenge for Neuralink is not getting the threads into the brain, but keeping the brain from rejecting them (our bodies are a little overly protective of critical organs). As the MIT Technology Review noted,

How long will an implant last? This could be the bugbear for Neuralink. While thin, flexible electrodes could last longer and cause less damage, reliability is a serious problem inside the brain, and electrodes cause tissue damage called gliosis. According to a tweet thread by Jacob Robinson, a professor at Rice University, Musk himself said the problem is “definitely not solved.” Robinson noted that it’s hard to speed up testing in animals of how long different electrode materials perform. Time doesn’t pass faster, even for billionaires.

Though it’s a different topic entirely, that last comment bears reading twice.

Neuralink held a part-marketing, part-technical rollout this past July and unveiled a sewing-machine-like robot that would perform the implants. Sounds easy enough. But there is still a long way to go with the threads-in-our-skulls solution, even if one can overcome public opposition to a “minimally invasive” procedure.

Side note: I’m wondering how my hairdresser would cope with this solution. Please don’t disable my AR Glasses.

Zuckerberg is smart enough to see that a noninvasive approach for smart glasses is easier in both the technical and public arenas. As he noted, he has no desire to be part of Congressional hearings on BCI solutions. We can’t fathom how that would unfold given the last round over social media and iPhones.

Facebook’s Noninvasive BCI Project

Facebook has been working on its noninvasive approach for years, with an initial announcement at F8 in 2017. But the pace picked up this year. Right before OC6 last week, it bought CTRL-labs, which is developing a wristband that translates the neural signals your brain sends to your hand into computer input. As Google learned with Glass, wearing something on your wrist is a lot easier to stomach than a futuristic device on your head. Because the approach is noninvasive, you wouldn’t get the kind of accuracy that Neuralink claims to offer. But even executing simple menu commands and virtual typing would revolutionize how we interact with our devices.

CTRL-labs was picked up for a mere $500 million to $1 billion, according to reports. Call it a bargain compared to Facebook’s other social and VR acquisitions.

Zuckerberg on Brain-Computer Interfaces for AR

We’ve pulled only the portion of the second part of the leaked recordings that covers augmented reality. But the entire transcript is worth reading. Zuckerberg covers a lot more, including his overall control of the company and Facebook’s public image. And he responds to, and somewhat dismisses as “a little overdramatic,” a matter most of us ignore: the PTSD suffered by Facebook’s content moderators. There are 30,000 of them, mostly outside contractors.

What was Zuckerberg’s reaction to the leaked transcript of the internal meeting? He promoted the link on Facebook. It was an eat-your-own-dog-food moment, though we’re sure Facebook never wanted this to see the light of day.

On to the transcript.

A company named Neuralink presented on their progress to develop a brain-computer interface, which they plan on human testing starting next year. First, do we have plans to integrate this kind of technology with our VR and AR products? And what do you think of privacy in a world where we could capture purchasing intent and deliver ads using a direct brain link?

Brain-computer interface is an exciting idea. The field quickly branches into two approaches: invasive and noninvasive. Invasive being things that require surgery or implants, but have the advantage that it’s actually in your brain, so you can get more signal. Non-invasive is like, you wear a band or, for glasses, you shine an optical light and get a sense of blood flow in certain areas of the brain. You get less signal from noninvasive.

We’re more focused on — I think completely focused on non-invasive. [laughter] We’re trying to make AR and VR a big thing in the next five years to 10 years … I don’t know, you think Libra is hard to launch. “Facebook wants to perform brain surgery,” I don’t want to see the congressional hearings on that one.

Look, I think it’s good that there’s research. I am very excited about the brain-computer interfaces for non-invasive. What we hope to be able to do is just be able to pick up even a couple of bits. So you could do something like, you’re looking at something in AR, and you can click with your brain. That’s exciting … Or a dialogue comes up, and you don’t have to use your hands, you can just say yes or no. That’s a bit of input. If you get to two bits, you can start controlling a menu, right, where basically you can scroll through a menu and tap. You get to a bunch more bits, you can start typing with your brain without having to use your hands or eyes or anything like that. And I think that’s pretty exciting. So I think as part of AR and VR, we’ll end up having hand interfaces, we’ll end up having voice, and I think we’ll have a little bit of just direct brain … But we’re going for the non-invasive approach, and, actually, it’s kind of exciting how much progress we’re making.
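Zuckerberg’s “bits” framing can be made concrete. The following is a minimal, hypothetical Python sketch, not anything Facebook has described: it assumes a noninvasive decoder that emits only two discrete signals, which we call `SCROLL` and `TAP`, and shows that those alone are enough to navigate a menu. It also computes how much information a direct choice among N equally likely options carries (log2 N bits), which hints at why typing needs “a bunch more bits” than a yes/no dialog.

```python
from __future__ import annotations

import math
from enum import Enum


class Signal(Enum):
    """Hypothetical discrete outputs of a noninvasive BCI decoder."""
    SCROLL = "scroll"  # move the highlight to the next menu item
    TAP = "tap"        # confirm the highlighted item


def navigate(menu: list[str], signals: list[Signal]) -> str | None:
    """Return the item chosen by a stream of SCROLL/TAP signals, or None."""
    index = 0
    for signal in signals:
        if signal is Signal.SCROLL:
            index = (index + 1) % len(menu)  # wrap around at the end of the menu
        elif signal is Signal.TAP:
            return menu[index]
    return None  # the user never confirmed a choice


def bits_per_choice(options: int) -> float:
    """Information needed to pick one of `options` equally likely choices."""
    return math.log2(options)


menu = ["Reply", "Share", "Save", "Close"]
print(navigate(menu, [Signal.SCROLL, Signal.SCROLL, Signal.TAP]))  # Save
print(bits_per_choice(2))             # 1.0 (a yes/no dialog)
print(round(bits_per_choice(26), 2))  # 4.7 (one letter of the alphabet)
```

The trade-off is visible in `navigate`: with only two signals, reaching the k-th item costs k scroll events plus a tap, so even a slow, low-bandwidth decoder is usable, just with more steps. A decoder that could distinguish more signals at once would shorten that path, which is what would make brain-driven typing practical.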

BCI, Spatial Computing, and Our Future

As Greenlight Insights noted,

Facebook’s acquisition of CTRL Labs signals an important long-term commitment to pioneering meaningful, next-generation computing devices that bring with them a generational step-change in hardware interfaces and human-computer interaction. Oculus CTO John Carmack took great pains during his OC6 keynote to specify that his vision of VR entails a universal computing platform. Far from being a gaming and entertainment device, VR should enable socialization, productivity, and all the other key functions current computers achieve today.

One way or the other, we’re going down the brain-computer interface path. For Facebook, it’s a logical extension of current advances in the use of hand tracking in VR. One of the best moments of OC6 was Zuckerberg criticizing the current state of VR interfaces. With our immersive devices, the wires and buttons have to disappear.

Yes, all of them.

In the near future, it will be a combination of voice, hands, and brains. Down the road? It’s easy to imagine voice and hands becoming the extraneous elements, getting in the way of our brain-driven interactions with technology and the world.

It will be one of the paradoxical moments of our future. Instead of struggling to get the technology out of the way in our interfaces, we’ll be asking ourselves to get out of the way.

We’re not sure where we sit in this debate, only that we’ve seen this coming for some time (hence our name, Digital Bodies). We do know that ethical issues are never well served by instant reactions. But one thing is crystal clear. VR and AR will force us to confront our relationship with our technology, the world, and ourselves. Of course, you can argue that technology has always done this, from the alphabet, the printing press, the railroad, and the telegraph to the transistor and the Digital Revolution.

But the introduction of BCI will pose fundamental questions for our era. And the decisions we make will have profound consequences for our future.

Emory Craig is a writer, speaker, and consultant specializing in virtual reality (VR) and generative AI. With a rich background in art, new media, and higher education, he is a sought-after speaker at international conferences. Emory shares unique insights on innovation and collaborates with universities, nonprofits, businesses, and international organizations to develop transformative initiatives in XR, GenAI, and digital ethics. Passionate about harnessing the potential of cutting-edge technologies, he explores the ethical ramifications of blending the real with the virtual, sparking meaningful conversations about the future of human experience in an increasingly interconnected world.