Meta 2 AR Glasses were announced last week and may well be a preview of our future work and learning environments. The $949 Developer Edition of Meta will not be available until the third quarter of 2016. And for the moment, Meta will still keep you tethered to a high-end computer. So the total cost will be more than the price of the device itself, though you could double down and use the same computer for that new HTC Vive you want to purchase.

Meta’s approach may not lead the way in entertainment when you can get fully immersive virtual experiences through high-end VR headsets. But along with Microsoft’s HoloLens, it opens up new possibilities in the so-called “mixed reality” area, a space where digital and holographic images are integrated into your environment.

Meta 2 Launch

You can still see the Meta 2 launch video if you missed it. More interesting is an actual demo shot through the device. This is nicely done without the special camera rig that Microsoft uses to shoot videos of the HoloLens experience:

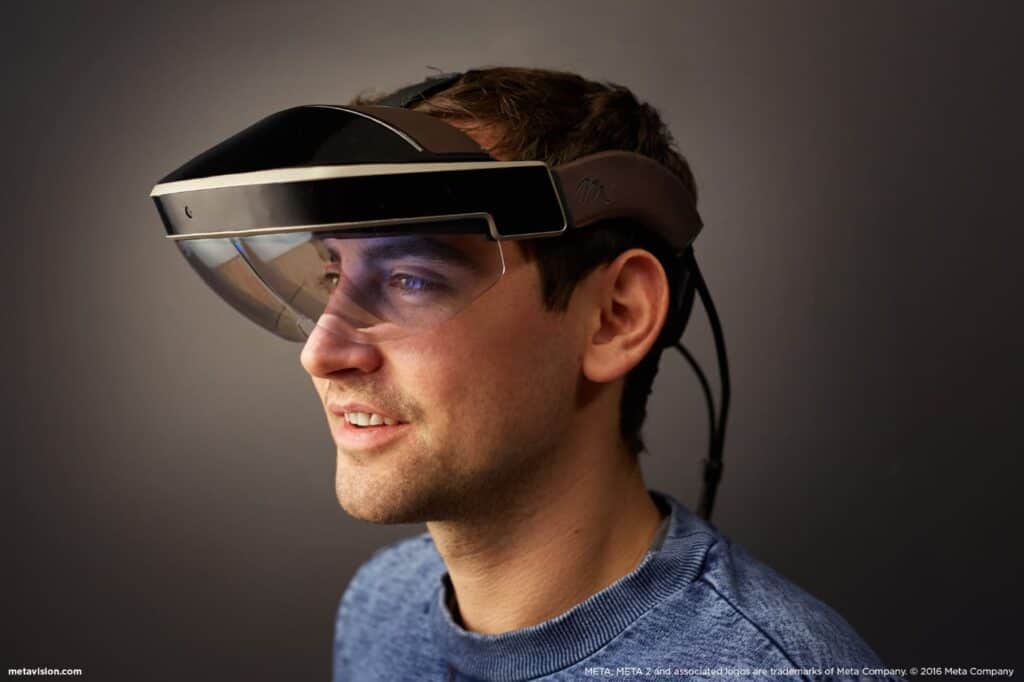

The first version of Meta some years back – born in the Google Glass era – was bulky and obscured your face. The new version looks like a visor, taking an approach that parallels Microsoft’s HoloLens and the DAQRI Smart Helmet. There’s a huge advantage in doing this. While the ultimate goal of smart glasses will be something that looks like our normal eyewear, the technology is not there yet. Expand the device to the size of a headset and you have room for the electronics and the battery.

Maybe it’s just our reaction, but a large visor like the Meta 2 seems less cyborg-like than the original design of Google Glass. Visors are already used in recreational and work activities (skiing, biking, construction work) and the wide lens doesn’t interfere with eye contact. Even better, you can wear one over your regular glasses, and that’s an issue for many of us.

Initial Reviews

So far, Meta’s generated lots of positive reviews, such as that of Lucas Matney in TechCrunch who called it “mind-bending”:

The near 90-degree field-of-view on the new Meta 2 is more expansive than anything I’ve seen to date and was sizable enough that everything I was viewing felt like it had visual context and wasn’t just butting into my regularly scheduled line of sight. For comparison’s sake, the Gear VR mobile headset offers 98-degree field-of-view.

We think FOV is a huge factor when it comes to augmented reality. On the other hand, a few reviewers have taken Meta to task for not having the environment mapping and advanced gestural interface found in HoloLens. That room mapping capability means that the holograms in Microsoft’s device stay where you place them in your surrounding space.

Meta 2 AR Collaboration

Like Microsoft, Meta has pushed the collaborative aspect of AR: two people wearing the devices can work on the same digital object simultaneously. At the moment, Microsoft may have the lead on this since you can collaborate with someone using HoloLens without having to wear one yourself. You only need a special version of Skype. But Meta’s short video of two people using its device is a fascinating glimpse of what our future work and learning environments will look like.

Meta and the Future

Meta’s approach right now is to get the device into the hands of developers, even if that means being tethered to a computer. But the company is banking on the technology shrinking with each generation, eventually cutting the cord. In the not too distant future, augmented reality devices will look like our current eyewear.

In the rush of AR developments here, don’t forget Magic Leap, the secretive company with a $4.5 billion valuation and a device that projects a light field into your eyes. The developments in augmented reality are moving faster than we realize.

At the recent TED 2016 conference, Meta CEO Meron Gribetz shared his vision of what augmented reality devices will look like:

. . . in about 5 years, these are all going to look like strips of glass on our eyes that project holograms. And just like we don’t care so much about which phone we buy in terms of the hardware; we buy it for the operating system, as a neuroscientist, I always dreamt of building the iOS of the mind, if you will.

Our glasses will be both fashion accessories and learning devices. And when I ask you about that new pair of Ralph Lauren shades you bought for your summer weekends, what I’ll really want to know is what OS they run. And what apps you’re using.

As Meta notes, this is a fundamental shift in the computing paradigm we’ve lived with for the past thirty years. Digital objects and actions will no longer be limited to our screens but embedded in our everyday world. Our workspaces and learning environments will be transformed in ways limited only by our imaginations.

Emory Craig is a writer, speaker, and consultant specializing in virtual reality (VR) and generative AI. With a rich background in art, new media, and higher education, he is a sought-after speaker at international conferences. Emory shares unique insights on innovation and collaborates with universities, nonprofits, businesses, and international organizations to develop transformative initiatives in XR, GenAI, and digital ethics. Passionate about harnessing the potential of cutting-edge technologies, he explores the ethical ramifications of blending the real with the virtual, sparking meaningful conversations about the future of human experience in an increasingly interconnected world.